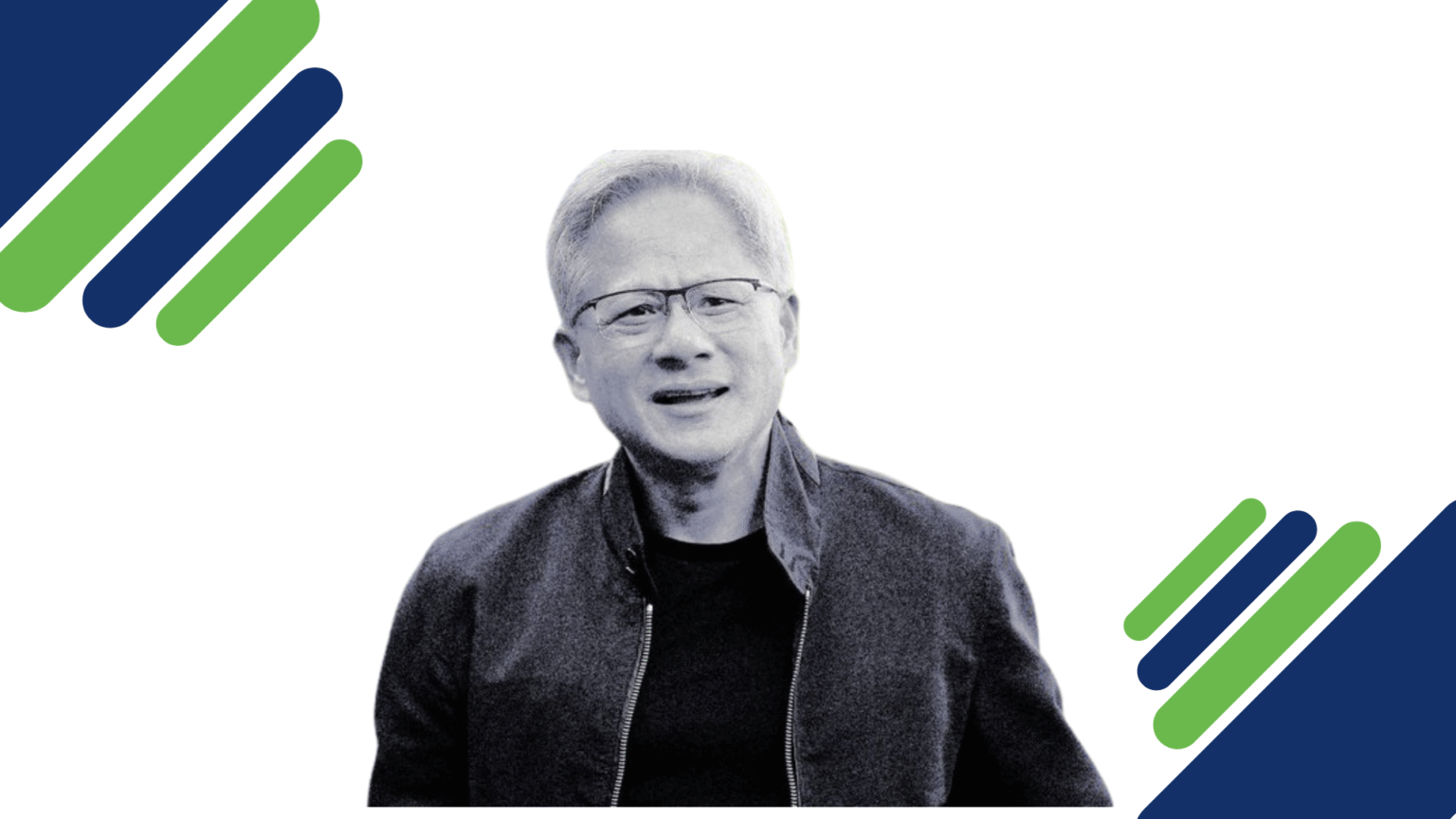

In a recent episode of the Lex Fridman Podcast, Jensen Huang made a statement that immediately sparked debate across the technology world: “I think we’ve achieved AGI.” The comment touches on one of the most contested and often misunderstood ideas in modern artificial intelligence: Artificial General Intelligence (AGI), typically defined as a system capable of performing any intellectual task that a human can, at an equal or greater level of competence.

For years, AGI has been treated as a distant milestone—something that might emerge in five, ten, or even twenty years. It has been a subject of speculation not only among researchers and engineers but also among policymakers and the general public. Yet Huang’s assertion challenges this timeline, suggesting that AGI may not be a future breakthrough but a present reality, at least in some form. His perspective reflects how rapidly AI capabilities have advanced, particularly in the domains of reasoning, content generation, and autonomous task execution.

During the discussion, Fridman framed AGI in practical terms, describing it as an AI system capable of effectively doing a person’s job—for instance, starting, managing, and scaling a billion-dollar company. This definition shifts the conversation away from abstract intelligence and toward real-world functionality. If an AI can replicate complex human workflows, make decisions, and adapt to changing conditions, does that qualify as AGI? Huang’s response—“I think it’s now”—suggests that, in his view, current systems are already approaching or meeting this threshold.

Part of the reasoning behind this claim lies in the rise of AI agents and platforms that allow individuals to create autonomous systems tailored to specific tasks. Huang referenced OpenClaw, an open-source platform that has recently gained attention for enabling users to deploy their own AI agents. These agents can perform a wide range of activities, from automating workflows to generating content and interacting with digital environments. The rapid adoption and experimentation surrounding such tools indicate that AI is no longer confined to centralized systems but is becoming increasingly distributed and personalized.

Huang pointed out that people are already using these agents in creative and unexpected ways. He envisioned scenarios where someone might create a digital influencer or a social application driven entirely by AI—something as simple and engaging as a virtual companion, reminiscent of a Tamagotchi, that suddenly becomes a viral success. This highlights a key shift in the AI landscape: innovation is no longer limited to large corporations but is increasingly driven by individuals and small teams leveraging powerful, accessible tools.

Despite his bold initial statement, Huang added an important caveat. While these AI agents demonstrate impressive capabilities, their impact is often short-lived. Many projects gain traction quickly but fail to sustain long-term engagement or deliver consistent value. This pattern suggests that while AI systems can mimic certain aspects of human intelligence, they may still lack the depth, reliability, and strategic thinking required for sustained success.

Huang underscored this limitation with a striking observation: even if thousands of AI agents are created, the likelihood of them collectively building a company like Nvidia is essentially zero. This remark serves as a reality check, emphasizing the gap between performing isolated tasks and achieving holistic, long-term intelligence. Running a successful enterprise requires not only technical execution but also vision, leadership, adaptability, and an understanding of complex human dynamics—qualities that current AI systems may not fully possess.

This tension lies at the heart of the AGI debate. On one hand, AI has reached a level where it can perform many tasks previously thought to require human intelligence. On the other hand, true general intelligence implies consistency, autonomy, and the ability to operate effectively across a wide range of unpredictable scenarios over extended periods. While today’s AI systems excel in specific domains, they often struggle with long-term planning, contextual understanding, and maintaining coherence in complex environments.

Another important aspect of this discussion is the evolving terminology around AGI. In recent months, many technology leaders have begun to distance themselves from the term, arguing that it is too vague and overhyped. Instead, they are introducing new phrases intended to provide clearer definitions, though these often describe similar capabilities. This shift reflects an attempt to manage expectations and focus on measurable progress rather than speculative milestones.

Huang’s comments can be seen as both provocative and strategic. By declaring that AGI has already been achieved, he challenges conventional thinking and encourages a reevaluation of what intelligence means in the context of machines. At the same time, his subsequent clarification acknowledges the limitations of current systems, suggesting that while we may be closer to AGI than previously thought, we have not yet fully realized its potential.

Ultimately, the question of whether AGI has been achieved depends largely on how it is defined. If AGI is viewed as the ability to perform a wide range of tasks at a human level, then recent advancements in AI agents and large-scale models may indeed represent a significant step in that direction. However, if it is defined as a system capable of independent, sustained, and transformative impact across all domains of human activity, then the journey is far from complete.

What is clear is that the boundaries of AI are being pushed at an unprecedented pace. As tools become more powerful and accessible, the line between human and machine capabilities continues to blur. Whether or not we have truly reached AGI, the implications of current advancements are profound, and they are only just beginning to unfold.